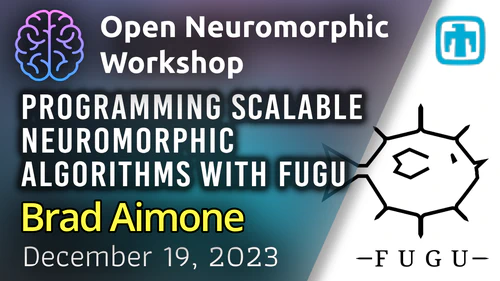

Biological cortical neurons are remarkably sophisticated computational devices, temporally integrating their vast synaptic input over an intricate dendritic tree, subject to complex, nonlinearly interacting internal biological processes.

To explore the computational implications of leaky memory units and nonlinear dendritic processing, we introduce the Expressive Leaky Memory (ELM) neuron model, a biologically inspired phenomenological model of a cortical neuron. Remarkably, by exploiting a few such slowly decaying memory-like hidden states and two-layered nonlinear integration of synaptic input, our ELM neuron can accurately match the input-output relationship of a cortical neuron with fewer than ten thousand trainable parameters.
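To make the two ingredients above concrete, here is a minimal NumPy sketch of a leaky memory update driven by a two-layer nonlinear integration of the input. It is an illustration under stated assumptions, not the ELM implementation: the function names, the `tanh` nonlinearities, the convex-combination update rule, the layer sizes, and the timescales `tau` are all hypothetical choices made for this example.

```python
import numpy as np


def init_params(n_inputs, n_memory, n_hidden, rng, scale=0.1):
    """Random weights for a hypothetical two-layer integration network."""
    return {
        "W1": rng.normal(0.0, scale, (n_hidden, n_inputs + n_memory)),
        "b1": np.zeros(n_hidden),
        "W2": rng.normal(0.0, scale, (n_memory, n_hidden)),
        "b2": np.zeros(n_memory),
        "w_out": rng.normal(0.0, scale, n_memory),
    }


def leaky_memory_step(x, m, params, tau, dt=1.0):
    """Advance the memory state m by one time step given input x.

    Assumed update rule (not taken from the source):
        m_new = kappa * m + (1 - kappa) * delta
    where kappa = exp(-dt / tau) is a per-unit decay factor and delta
    is the output of a two-layer nonlinear network over [x, m].
    """
    kappa = np.exp(-dt / tau)  # close to 1 for large tau: slow decay
    hidden = np.tanh(params["W1"] @ np.concatenate([x, m]) + params["b1"])
    delta = np.tanh(params["W2"] @ hidden + params["b2"])
    return kappa * m + (1.0 - kappa) * delta


def run(inputs, params, tau):
    """Run the sketch over a sequence; return readouts and the final memory."""
    m = np.zeros(tau.shape[0])
    outputs = []
    for x in inputs:
        m = leaky_memory_step(x, m, params, tau)
        outputs.append(params["w_out"] @ m)  # linear readout of the memory
    return np.array(outputs), m


# Usage with arbitrary, illustrative sizes and timescales.
rng = np.random.default_rng(0)
n_inputs, n_memory, n_hidden = 8, 4, 16
params = init_params(n_inputs, n_memory, n_hidden, rng)
tau = np.array([1.0, 10.0, 100.0, 1000.0])  # one timescale per memory unit
inputs = rng.random((50, n_inputs))
outputs, m_final = run(inputs, params, tau)
```

In this sketch, units with a large `tau` change slowly and so retain information over many steps, while units with a small `tau` track recent input. Because each update is a convex combination of the previous state and a `tanh` output, the memory values stay bounded in magnitude by 1.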

We evaluate the model on various tasks with demanding temporal structures, including the Long Range Arena (LRA) datasets and a novel neuromorphic dataset based on the Spiking Heidelberg Digits dataset (SHD-Adding). The ELM neuron reliably outperforms the classic Transformer and Chrono-LSTM architectures on these tasks, even solving the Pathfinder-X task (16k context length) with over 70% accuracy.
